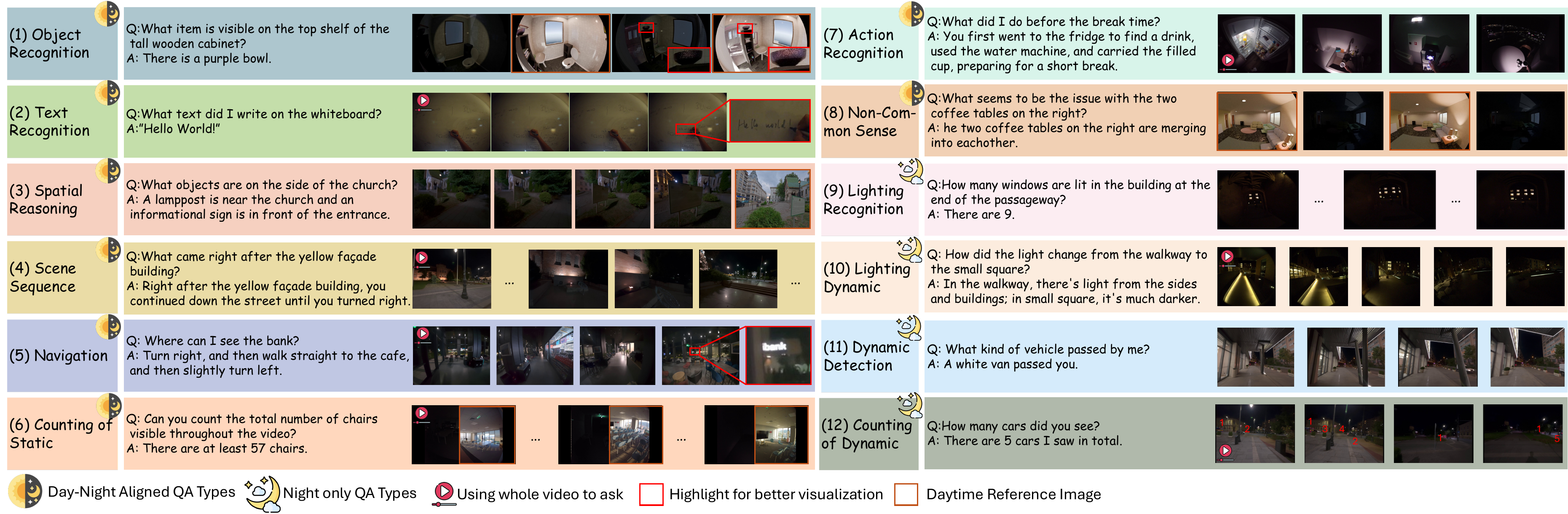

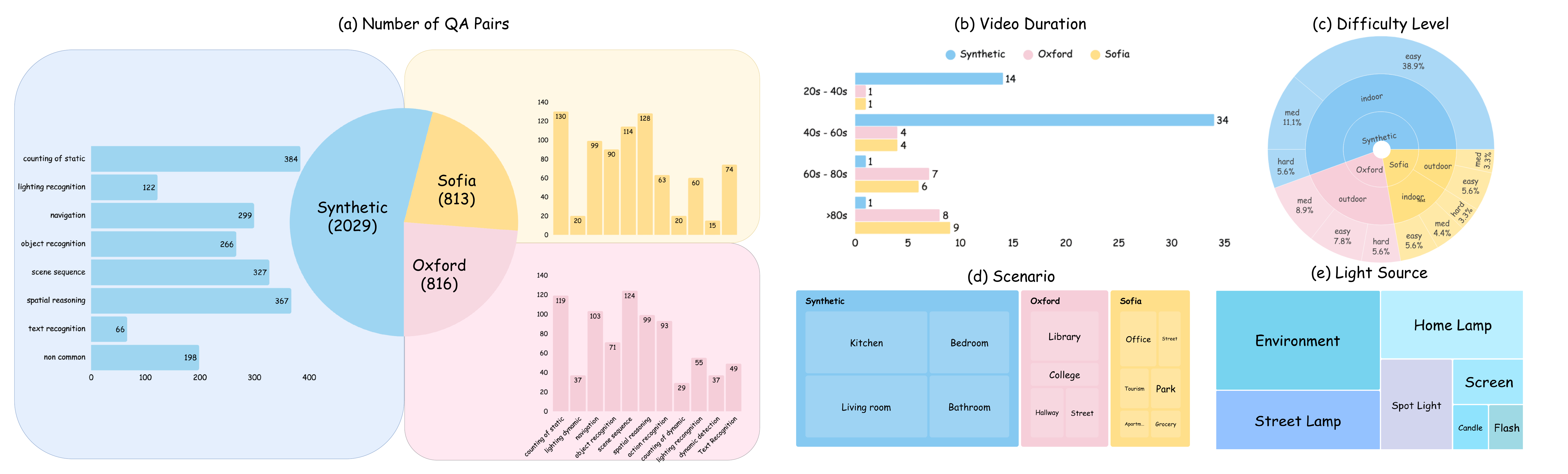

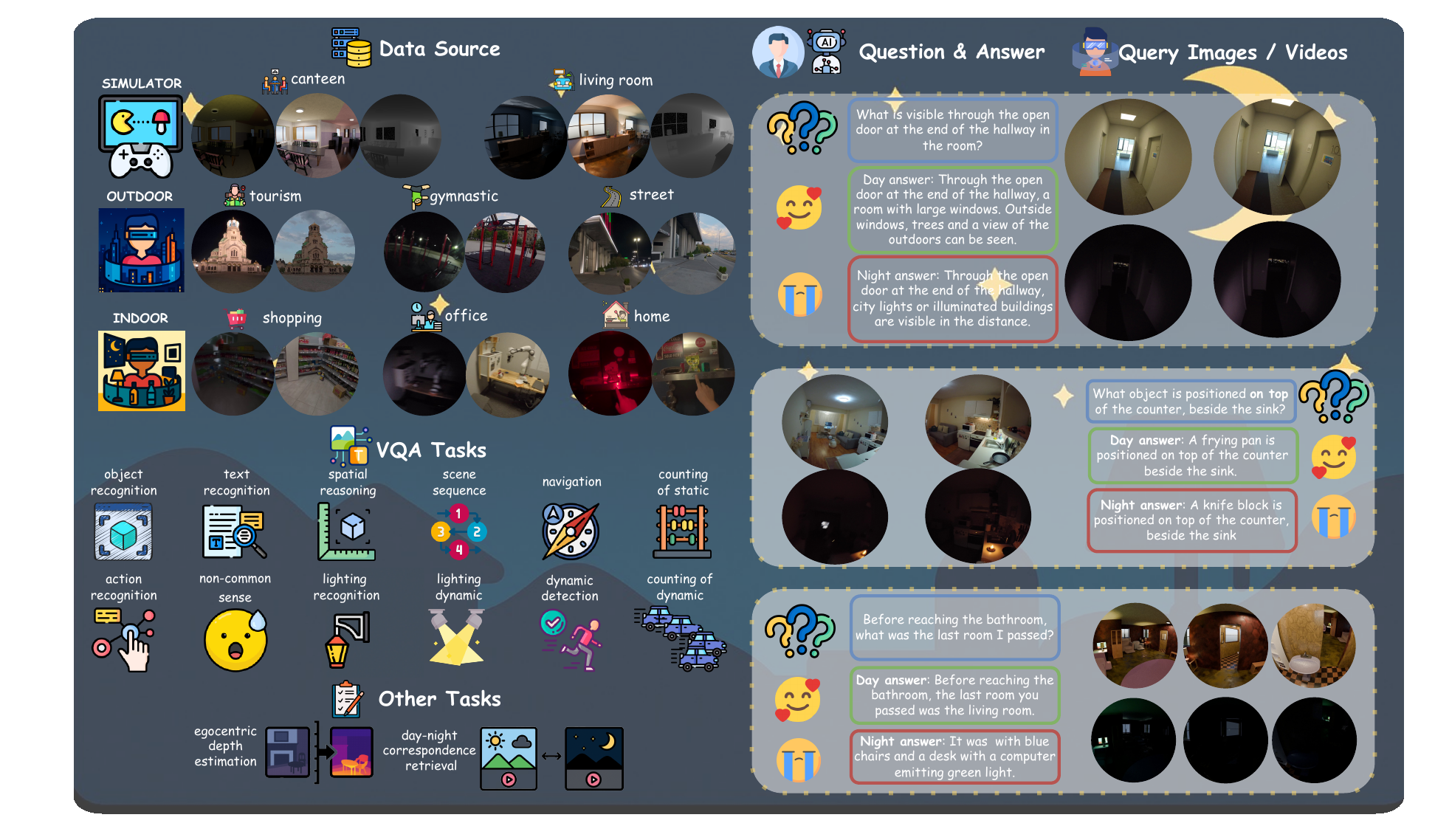

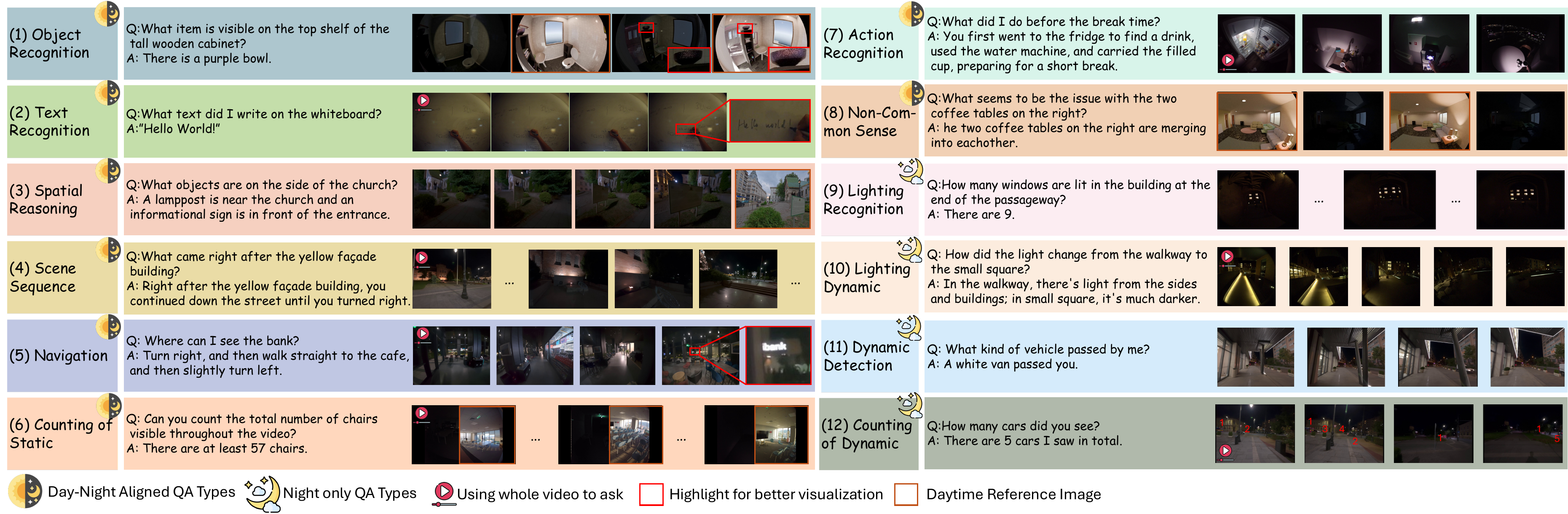

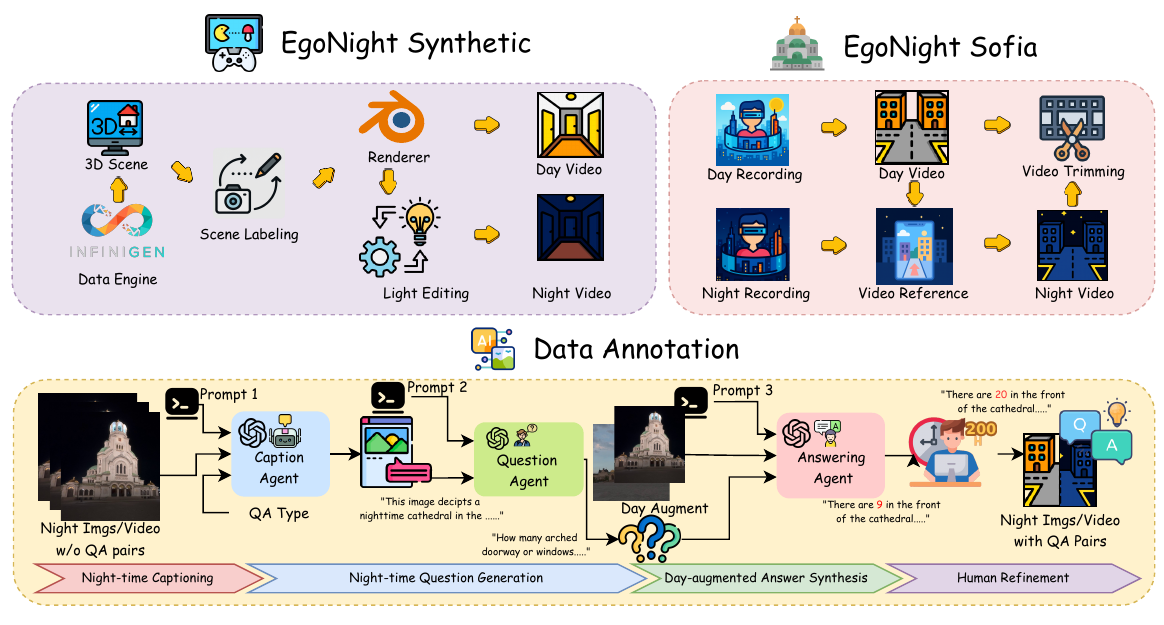

Dataset Design

EgoNight combines aligned synthetic and real-world sources with diverse scenes, illumination types, and easy/medium/hard difficulty levels.

- Synthetic: 50 aligned pairs
- Sofia: 20 aligned pairs
- Oxford: 20 night videos
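
As a quick consistency check, the snippet below totals the per-source counts. It is a minimal sketch that assumes each aligned pair is one night video matched to one daytime video, and that the Oxford videos have no daytime counterparts. The `SOURCES` dictionary and `tally` helper are hypothetical and are not part of any EgoNight release.

```python
# Per-source counts from the Dataset Design summary.
# Assumption: an "aligned pair" is one night video matched with one day video.
SOURCES = {
    "Synthetic": {"night_videos": 50, "aligned_day_videos": 50},
    "Sofia": {"night_videos": 20, "aligned_day_videos": 20},
    # Assumption: Oxford is night-only, since no day counterparts are listed.
    "Oxford": {"night_videos": 20, "aligned_day_videos": 0},
}


def tally(sources):
    """Return (total night videos, total aligned day-night pairs)."""
    night = sum(s["night_videos"] for s in sources.values())
    pairs = sum(s["aligned_day_videos"] for s in sources.values())
    return night, pairs


night, pairs = tally(SOURCES)
print(f"night videos: {night}, aligned pairs: {pairs}")  # night videos: 90, aligned pairs: 70
```

Under these assumptions, the benchmark draws on 90 night videos, 70 of which have an aligned daytime counterpart.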

¹INSAIT, Sofia University; ²East China Normal University; ³HKUST(GZ); ⁴Nankai University; ⁵Fudan University

ICLR 2026


EgoNight is the first benchmark suite for nighttime egocentric vision. It is centered on visual question answering (VQA) and extended with night-to-day retrieval and depth estimation.


All 3,658 QA pairs were manually verified, representing more than 300 hours of total annotation effort.

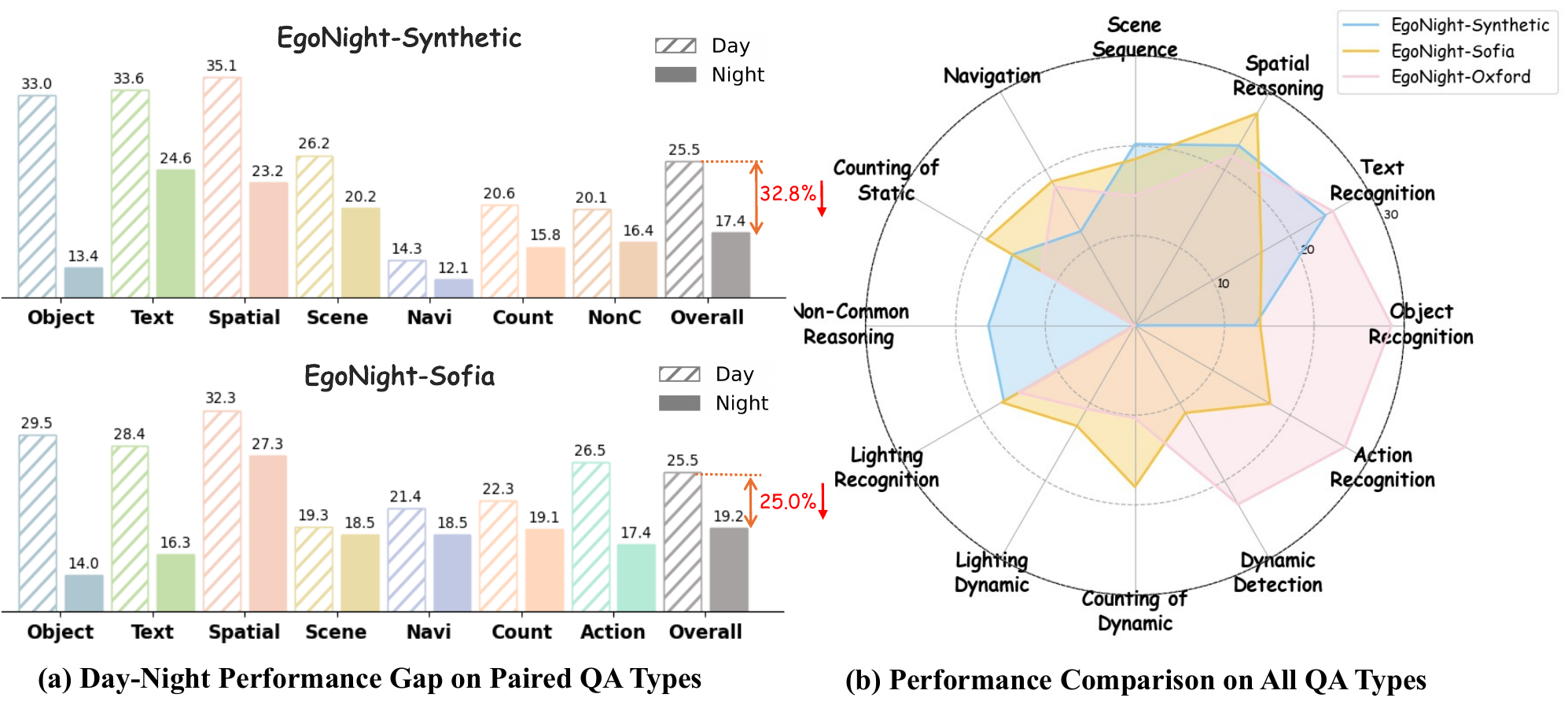

Even top MLLMs struggle in nighttime egocentric settings: the best closed-source model reaches only 30.93% average accuracy.

| Category | Best model | Avg. Acc (%) |
|---|---|---|
| Closed-source | | 30.93 |
| Open-source | InternVL3-8B | 20.06 |
| Egocentric | EgoGPT | 14.29 |
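
The category gaps behind this claim follow directly from the table. The snippet below is plain arithmetic on the reported averages, not an additional result; the `BEST_ACC` mapping and `gaps_to_best` helper are hypothetical names.

```python
# Best average VQA accuracy (%) per model category, copied from the table.
BEST_ACC = {"Closed-source": 30.93, "Open-source": 20.06, "Egocentric": 14.29}


def gaps_to_best(best_acc):
    """Percentage-point gap between each category's best model and the overall best."""
    top = max(best_acc.values())
    return {cat: round(top - acc, 2) for cat, acc in best_acc.items()}


print(gaps_to_best(BEST_ACC))
# {'Closed-source': 0.0, 'Open-source': 10.87, 'Egocentric': 16.64}
```

The strongest egocentric model, EgoGPT, trails the best closed-source result by about 16.6 points, and even that best result stays below 31% average accuracy.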

| Model | Spatial N→D (Syn / Sofia) | Temporal N→D (Syn / Sofia) |
|---|---|---|
| GPT-4.1 | 54.1 / 84.5 | 10.0 / 15.5 |
| Perception Encoder | 41.6 / 80.9 | 32.9 / 33.4 |
| DINOv2 | 28.7 / 74.5 | 33.7 / 33.1 |
| InternVL3-8B | 27.7 / 56.3 | 9.9 / 13.3 |
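
The page does not spell out how the night-to-day (N→D) retrieval scores are computed. For embedding models such as DINOv2 or Perception Encoder, one common protocol, assumed here, is nearest-neighbor top-1 accuracy: each night query is matched to the day item with the highest cosine similarity, and a hit is counted when that item is the query's ground-truth counterpart (assumed to share its index). The helper names and toy embeddings are hypothetical, and the sketch does not cover how the MLLMs in the table were scored.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb)


def top1_accuracy(night_queries, day_gallery):
    """Percentage of night queries whose most similar day item is the one
    at the same index (the assumed ground-truth pairing)."""
    hits = 0
    for i, q in enumerate(night_queries):
        sims = [cosine(q, g) for g in day_gallery]
        best = max(range(len(sims)), key=lambda j: sims[j])
        hits += int(best == i)
    return 100.0 * hits / len(night_queries)


# Toy 2-D embeddings: queries 0 and 1 retrieve their partners,
# query 2 lands closest to day item 0 instead of day item 2.
night = [[1.0, 0.1], [0.1, 1.0], [0.9, 0.2]]
day = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.5]]
print(top1_accuracy(night, day))
```

In the toy example two of the three queries retrieve their counterpart, giving about 66.7% top-1 accuracy.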

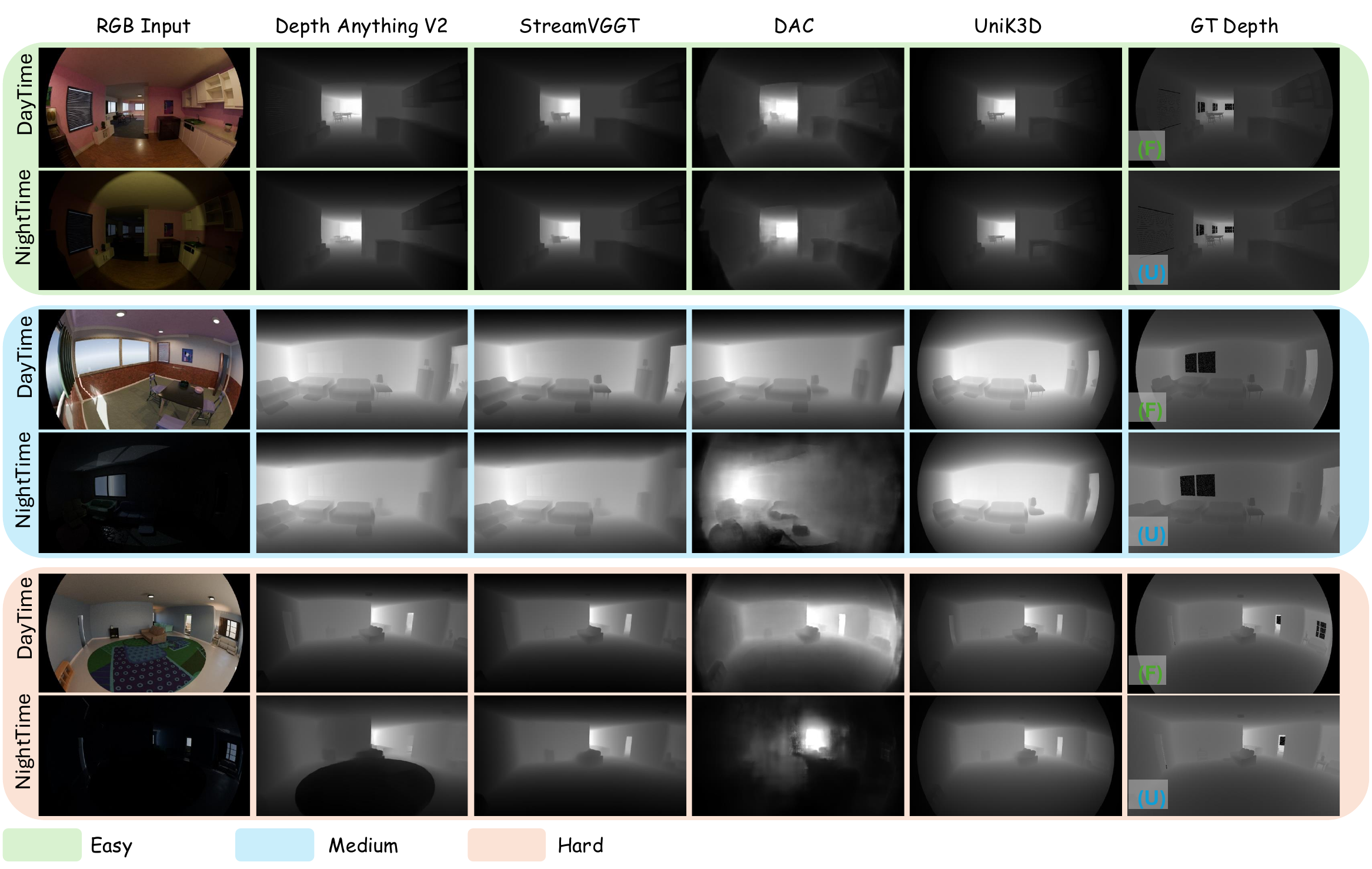

| Method | AbsRel ↓ (Day / Night) | δ1 ↑ (Day / Night) |
|---|---|---|
| Depth Anything | 0.297 / 0.302 | 0.249 / 0.237 |
| VGGTStream | 0.293 / 0.298 | 0.234 / 0.232 |
| DAC | 0.245 / 0.292 | 0.255 / 0.216 |
| UniK3D | 0.224 / 0.253 | 0.280 / 0.254 |
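
AbsRel and δ1 are standard monocular depth metrics: AbsRel is the mean of |d̂ - d| / d over pixels with valid ground-truth depth d, and δ1 is the fraction of those pixels where max(d̂ / d, d / d̂) < 1.25. The sketch below computes both on flat lists of depths. It assumes predictions are already in the same scale as the ground truth; the page does not say whether any scale or shift alignment is applied before scoring. `depth_metrics` is a hypothetical helper, not the benchmark's evaluation code.

```python
def depth_metrics(pred, gt, eps=1e-6):
    """Return (AbsRel, delta1) over pixels with valid ground truth (gt > eps)."""
    # Keep only pixels that have ground-truth depth; clamp predictions so the
    # ratios below never divide by zero.
    pairs = [(max(p, eps), g) for p, g in zip(pred, gt) if g > eps]
    if not pairs:
        raise ValueError("no pixels with valid ground-truth depth")
    n = len(pairs)
    # AbsRel: mean absolute error relative to ground truth (lower is better).
    abs_rel = sum(abs(p - g) / g for p, g in pairs) / n
    # delta1: share of pixels whose prediction is within a factor of 1.25
    # of the ground truth (higher is better).
    delta1 = sum(max(p / g, g / p) < 1.25 for p, g in pairs) / n
    return abs_rel, delta1


gt = [1.0, 2.0, 4.0, 0.0]    # 0.0 marks a pixel without ground truth
pred = [1.1, 2.0, 6.0, 3.0]
print(depth_metrics(pred, gt))
```

On the toy input the unlabeled fourth pixel is ignored, giving AbsRel ≈ 0.2 and δ1 = 2/3; a night/day gap in the table shows up as a higher AbsRel and lower δ1 on the night column.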

Copyright (c) 2026 EgoNight authors. All rights reserved.